You can further aggregate the events over longer windows and remove some information like port numbers for rejected traffic. VPC Flow records are already aggregated, and you can configure aggregation intervals in AWS. Another way to reduce data volume is to aggregate similar events. You can begin by focusing on passing events containing flows to/from public IPs only this will drop all East-West traffic events (communication between private IPs as defined in RFC1918). Let’s dive a bit deeper into some of the Pack functionality and the Function Groups, starting from data reduction. Once you are done with configuration on the AWS side, enable the matching Stream input (called Amazon SQS or Amazon Firehose in the Stream UI).Ī Closer Look at Reduction, Aggregation, and Enrichment

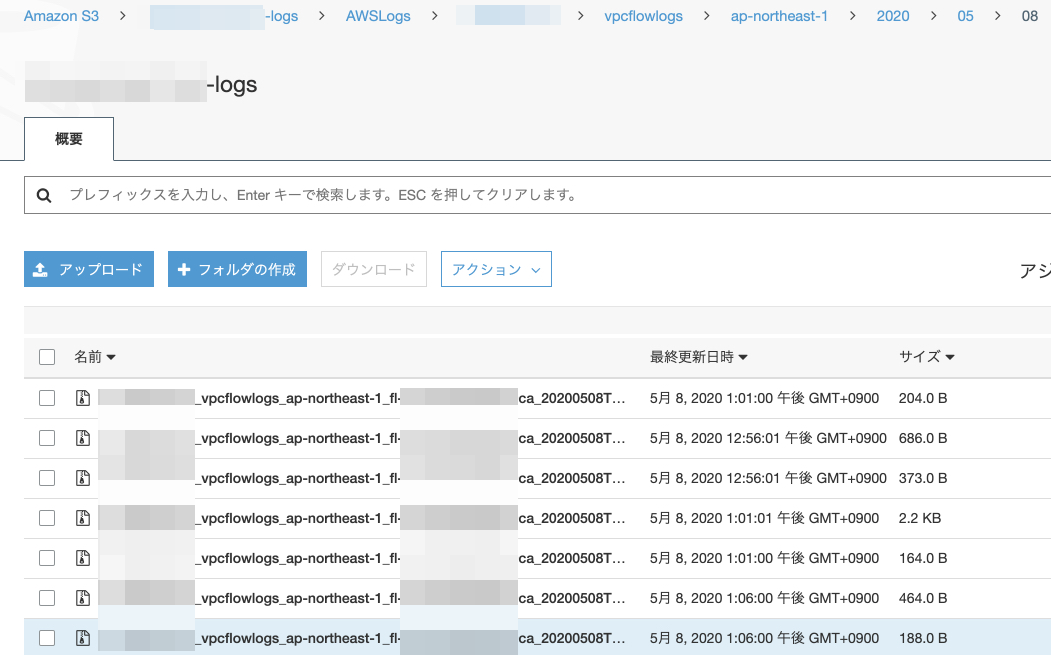

If you are using the S3 method, you will benefit from hooking up AWS SQS to avoid scanning S3 for what’s new on a schedule. Follow these AWS instructions to publish the logs to S3, or send them to AWS Kinesis Data Firehose. Configure VPC Flow Logs to be sent to Stream. Create a Route from the source where your logs are coming from (e.g., S3, Kinesis Firehose) to your security tool, and select the Pack’s pipeline called VPC Flow Logs for Security Teams in the dropdown list for the Pipeline field. Create a Route in Stream and attach the Pack to the Route. On the screenshot below you can see the Function groups that have comments above them describing what each group does, and whether a group is optional or required for the pack to function. Select the VPC Flow Logs sample that comes with the Pack and experiment with enabling and disabling Function Groups to see what the events look like when you toggle optional groups on and off. Select the transformations you’d like to apply, such as data reduction, enrichment, and format changes. If you haven’t used Packs yet, this is a good overview. Add this pack to your Stream or Stream Cloud environment. Add the VPC Flow Logs Pack for Security Teams to your Stream environment. The steps to configure Cribl Stream and VPC Flow Logs are:

We’ll use AWS VPC Flow Logs as an example here, but the logic can be applied to other cloud flow logs and security data sources. So what can you do? In this post, I’ll share a practical approach using Stream to simultaneously add context to logs while reducing their volume. But if you start enriching already voluminous flow logs, the volume will go up even more. If the VPC Flow Logs are not enriched with host information at the time the logs are collected, figuring out the meaning of those IPs can be very difficult later. That container or instance might have existed for a brief moment last Friday. You cannot just look up which container or instance had a specific IP address.

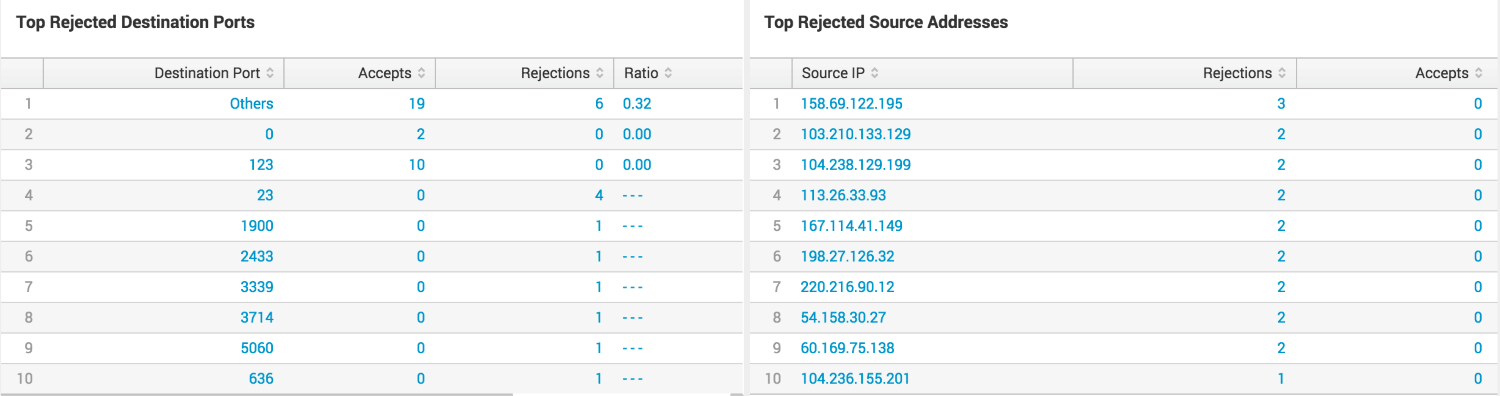

Even if you start analyzing VPC Flow Logs, how easy is it to figure out what each IP address means in your environment? In a cloud environment where many things are ephemeral, the issue with context becomes even bigger. If you’re not looking at what’s happening on your network in the cloud, it’s easy to miss anomalous network traffic patterns that can alert you to potentially malicious activities like data exfiltration, lateral movement attempts, communication with a command-and-control (C2) hosts, and so on.Īnother challenge I consistently see is the lack of context. And you ask yourself: “Can I afford sending that much data to my security tool (Splunk or Elasticsearch, SIEM, UBA, etc.)?” The answer can often be no, especially if your tools are licensed based on data volume or you require extra infrastructure to run the tool on. But when you go through the list of AWS log sources, you inevitably get to VPC Flow Logs that can easily be several times larger than all your other AWS logs combined. But are you currently monitoring and analyzing all of those data sources? For example, if you use AWS, you likely started from looking at CloudTrail logs, AWS Config, maybe S3 access logs. You cannot just not monitor and analyze your security data sources in the cloud, so you already have some sort of solution in place. After all, that’s what the cloud providers are saying: it’s a shared security responsibility model, and security teams need to do their part. Cloud providers help with visibility somewhat, but that is just not enough for security teams. I’ve also seen a large percentage of newly launched companies go with cloud services almost exclusively, limiting their on-premises infrastructure to what cannot be done in the cloud - things like WiFi access points in offices or point of sale (POS) hardware for physical stores.Īs a result, security teams at these organizations need better visibility into what’s actually going on in their cloud environments. 
In the last few years, many organizations I worked with have significantly increased their cloud footprint.
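To make the reduction ideas from this post concrete (drop East-West flows between RFC1918 addresses, then aggregate over a longer window and ignore port numbers for rejected traffic), here is a minimal Python sketch. It is only an illustration of the logic, not how the Pack is implemented: the function names and the 300-second window are my own assumptions, and events are assumed to be already parsed into dictionaries that use the default VPC Flow Logs field names (`srcaddr`, `dstaddr`, `srcport`, `dstport`, `protocol`, `packets`, `bytes`, `start`, `action`) with numeric fields as integers.

```python
import ipaddress
from collections import defaultdict

# The three private IPv4 ranges defined in RFC 1918. They are listed explicitly
# because ipaddress's is_private also covers other reserved ranges.
RFC1918_NETWORKS = [
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("172.16.0.0/12"),
    ipaddress.ip_network("192.168.0.0/16"),
]


def is_rfc1918(addr: str) -> bool:
    """Return True if addr falls inside one of the RFC 1918 ranges."""
    ip = ipaddress.ip_address(addr)
    return any(ip in net for net in RFC1918_NETWORKS)


def is_east_west(event: dict) -> bool:
    """A flow is East-West when both endpoints are private addresses."""
    return is_rfc1918(event["srcaddr"]) and is_rfc1918(event["dstaddr"])


def reduce_flows(events, window_seconds=300):
    """Drop East-West flows and aggregate the rest per time window.

    window_seconds is a hypothetical setting chosen for illustration.
    """
    totals = defaultdict(lambda: {"bytes": 0, "packets": 0, "flows": 0})
    for e in events:
        if is_east_west(e):
            continue  # private-to-private traffic is dropped entirely
        window_start = e["start"] - e["start"] % window_seconds
        # Ports are ignored for rejected traffic, so rejected flows between
        # the same pair of hosts collapse into a single record.
        rejected = e["action"] == "REJECT"
        key = (
            window_start,
            e["srcaddr"],
            e["dstaddr"],
            None if rejected else e["srcport"],
            None if rejected else e["dstport"],
            e["protocol"],
            e["action"],
        )
        totals[key]["bytes"] += e["bytes"]
        totals[key]["packets"] += e["packets"]
        totals[key]["flows"] += 1

    fields = ("start", "srcaddr", "dstaddr", "srcport", "dstport", "protocol", "action")
    return [dict(zip(fields, key), **counts) for key, counts in totals.items()]
```

Each output record carries the summed `bytes` and `packets` plus a `flows` count, so the security tool still sees how much traffic moved between two hosts even though the individual records are gone.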
